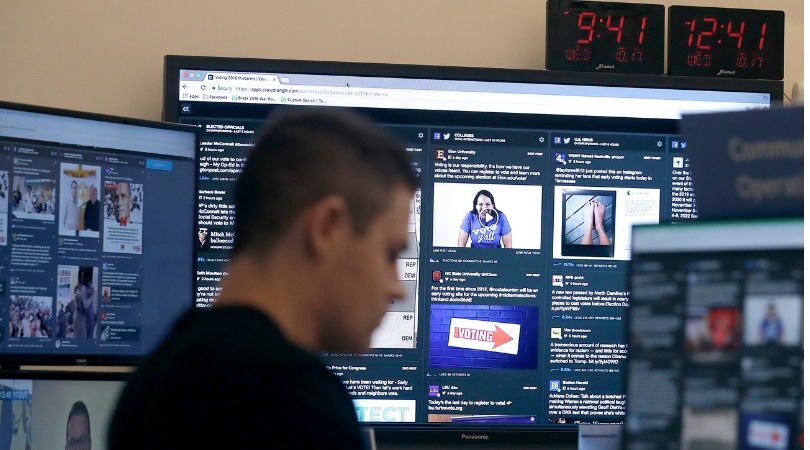

Facebook said it blocked 115 accounts for suspected “coordinated inauthentic behavior” linked to foreign groups attempting to interfere in Tuesday’s U.S. midterm elections. The social media company shut down 30 Facebook accounts and 85 Instagram accounts and is investigating them in more detail, it said in a blog post late Monday.

Facebook acted after being tipped off Sunday by U.S. law enforcement officials. Authorities notified the company about recently discovered online activity “they believe may be linked to foreign entities,” Facebook’s head of cybersecurity policy, Nathaniel Gleicher, wrote in the post.

U.S. tech companies have stepped up their work against disinformation campaigns, aiming to stymie online troublemakers’ efforts to divide voters and discredit democracy. Facebook’s purge is part of countermeasures to prevent abuses like those used by Russian groups two years ago to sway public opinion ahead of the 2016 U.S. presidential election.

The company, based in Menlo Park, California, has been disclosing such purges fairly regularly in recent months, most recently in October. More are likely because, even as its systems get better at detecting and removing malicious accounts, bad actors are sharpening their attacks, too. Gleicher said Facebook will provide an update once it learns more, including whether the blocked accounts are linked to the Russia-based Internet Research Agency or other foreign entities.

An election update about removing additional Facebook and Instagram accounts: https://t.co/Ld983jFkJ4

— Meta (@Meta) November 6, 2018

Almost all of the Facebook pages associated with the blocked accounts appeared to be in French or Russian. The Instagram accounts were mostly in English and were focused either on celebrities or political debate. No further details were given about the accounts or suspicious activity.

Also on Monday, Facebook acknowledged that it didn’t do enough to prevent its services from being used to incite violence and spread hate in Myanmar. Alex Warofka, a product policy manager, said in a blog post that Facebook “can and should do more” to protect human rights and ensure it isn’t used to foment division and spread offline violence in the country.

Last month, Facebook removed 82 pages, accounts and groups tied to Iran and aimed at stirring up strife in the U.S. and the U.K. It carried out an even broader sweep in August, removing 652 pages, groups and accounts linked to Russia and Iran.

Today we took down multiple Pages, groups and accounts that originated in Iran for engaging in coordinated inauthentic behavior on FB and IG. Find out more: https://t.co/C2ukHdBCXq

— Meta (@Meta) October 26, 2018

Twitter, meanwhile, has said it has identified more than 4,600 accounts and 10 million tweets, mostly affiliated with the Internet Research Agency, which was linked to foreign meddling in U.S. elections, including the presidential vote of 2016. The agency, a Russian troll farm, has been indicted by U.S. Special Counsel Robert Mueller for its actions during the 2016 vote.

Facebook, Twitter and other companies have been fighting misinformation and election meddling on their services for the past two years. There are signs they’re making headway, although they’re still a very long way from winning the war.

Facebook, in particular, has reversed its stance of late 2016, when CEO Mark Zuckerberg dismissed as “pretty crazy” the notion that fake news on his service could have swayed the presidential election.

In July, for instance, the company said that its spending on security and content moderation, coupled with other business shifts, would hinder its growth and profitability. Investors expressed their displeasure by knocking $119 billion off Facebook’s market value.

One problem is that it’s not just agents from Russia and other nations who are intent on sharing misinformation and propaganda. There is plenty of homegrown fake news too, whether in the U.S. or elsewhere.

Still, Facebook is seeing some payoff, and not just with the accounts it has been able to find and take down. A recent research collaboration between New York University and Stanford found that user “interactions” with fake news stories on Facebook, which rose substantially in 2016 during the presidential campaign, fell significantly between the end of 2016 and July 2018. On Twitter, however, the sharing of such stories continued to rise over the past two years.